I had no time at all for class in week 10, so I could only catch up during the week 11 spring break.

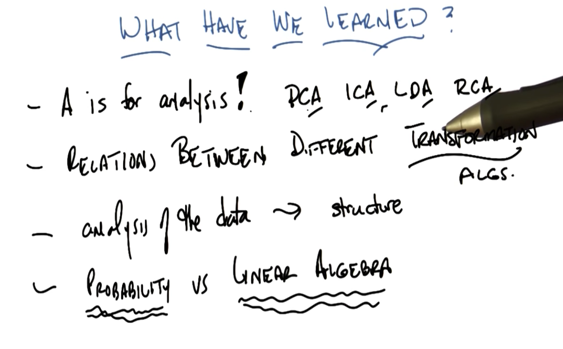

This week: going over Feature Transformation and starting on Information Theory.

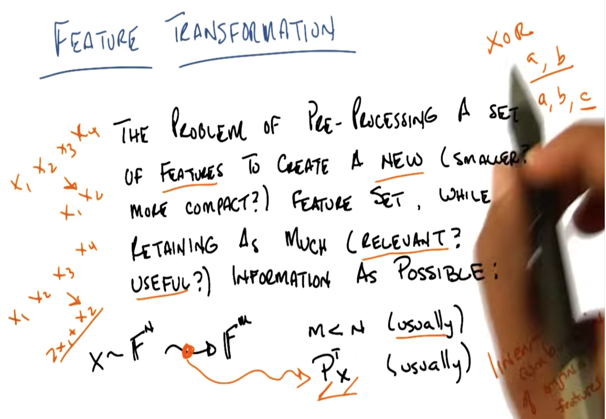

- Feature selection is a subset of feature transformation

- The transformation operator produces linear combinations of the original features
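To make the two bullets above concrete, here is a minimal sketch assuming a linear transformation written as a matrix product. The data and the matrices `P_select` and `P_mix` are made up for illustration. A matrix whose columns each pick one original feature gives plain feature selection, which is why selection is a special case of transformation.

```python
import numpy as np

# Toy data: 2 samples, N = 3 original features (values are made up).
X = np.array([[1.0, 2.0, 3.0],
              [4.0, 5.0, 6.0]])

# Feature selection as a special case: each column picks one original feature.
# This matrix keeps features 0 and 2 and drops feature 1.
P_select = np.array([[1.0, 0.0],
                     [0.0, 0.0],
                     [0.0, 1.0]])

# General linear transformation: each new feature mixes several originals.
P_mix = np.array([[1.0, 0.5],
                  [1.0, -0.5],
                  [0.0, 1.0]])

X_selected = X @ P_select  # same as X[:, [0, 2]]
X_mixed = X @ P_mix        # new features are linear combinations
```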

Why do Feature Transformation?

- XOR, kernel methods, and neural networks already do feature transformation (FT).

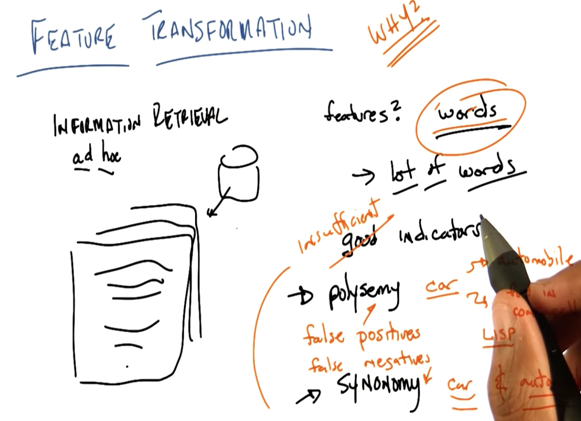

- Ad hoc information retrieval problem: finding documents within a corpus that are relevant to an information need specified by a query. (The query is not known in advance.)

- Problems of Information Retrieval:

- Polysemy: a word has multiple meanings. This causes false positives.

- Synonymy: a meaning can be expressed by multiple words. This causes false negatives.
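A tiny sketch of both problems, assuming a naive exact-keyword matcher. The corpus and the `keyword_search` helper are hypothetical and only meant to show the failure modes.

```python
# Hypothetical four-document corpus.
docs = {
    "d1": "the car dealer sold a used car",
    "d2": "an automobile needs regular maintenance",
    "d3": "apple pie recipe with fresh apple slices",
    "d4": "apple released a new laptop",
}

def keyword_search(query, corpus):
    """Return ids of documents that contain every query word (exact match)."""
    terms = query.lower().split()
    return sorted(
        doc_id
        for doc_id, text in corpus.items()
        if all(term in text.lower().split() for term in terms)
    )

# Synonymy: d2 is about cars but says "automobile", so it is missed
# (a false negative).
car_hits = keyword_search("car", docs)

# Polysemy: someone looking for the fruit also gets the laptop document
# (a false positive), because "apple" has two meanings.
apple_hits = keyword_search("apple", docs)
```

Feature transformation is one way to attack this: combine words into new features so that "car" and "automobile" land close together.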

PCA

This paper does a fantastic job building the intuition and implementation behind PCA

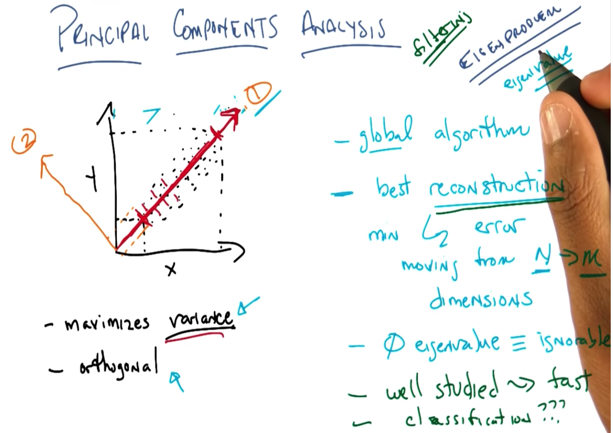

An eigenproblem is a computational problem that can be solved by finding the eigenvalues and/or eigenvectors of a matrix. In PCA, the matrix we analyze is the covariance matrix of the data (see the paper for details).
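As a sketch of what this eigenproblem looks like (the symbols are my own notation, not taken from the paper): for $n$ samples $x_i$ with $d$ features and mean $\bar{x}$, the sample covariance matrix $C$ and its eigenvector/eigenvalue pairs $(v_k, \lambda_k)$ satisfy

$$
C = \frac{1}{n-1}\sum_{i=1}^{n} (x_i - \bar{x})(x_i - \bar{x})^{\top},
\qquad
C\,v_k = \lambda_k v_k,
\qquad
\lambda_1 \ge \lambda_2 \ge \dots \ge \lambda_d \ge 0 .
$$

The eigenvectors $v_k$ are the principal components, and $\lambda_k$ is the variance of the data along $v_k$.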

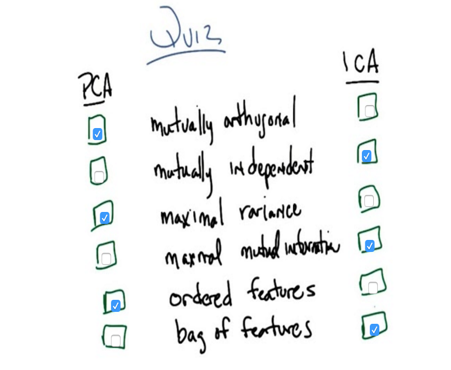

PCA Features

- maximize variance

- mutually orthogonal (all components are perpendicular to each other)

- Global algorithm: the resulting components are subject to a global constraint, namely that they must be mutually orthogonal

- It gives the best reconstruction.

- Eigenvalues are monotonically non-increasing, and a component with a 0 eigenvalue can be ignored (irrelevant, maybe not useful).

- It’s well studied and fast to run.

- With respect to classification, it is like using a filtering method to select which dimensions to use.

- PCA is about finding
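The properties listed above can be checked numerically. This is a minimal sketch using NumPy on made-up toy data. It is not the implementation from the paper. It verifies that the components are orthogonal, that the eigenvalues are non-increasing, and that the reconstruction error from keeping the top `k` components equals the sum of the discarded eigenvalues.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy data: 500 samples, 3 correlated features (made up for illustration).
latent = rng.normal(size=(500, 2))
X = latent @ np.array([[2.0, 0.5, 1.0],
                       [0.0, 1.0, -1.0]])
X += 0.05 * rng.normal(size=X.shape)

# Center the data and solve the eigenproblem on the covariance matrix.
Xc = X - X.mean(axis=0)
cov = np.cov(Xc, rowvar=False)
eigvals, eigvecs = np.linalg.eigh(cov)      # eigh returns ascending order
order = np.argsort(eigvals)[::-1]           # re-sort: largest variance first
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

# Mutually orthogonal components: this should be the identity matrix.
gram = eigvecs.T @ eigvecs

# Keep the top k components, project, and reconstruct.
k = 2
W = eigvecs[:, :k]
X_hat = (Xc @ W) @ W.T

# Squared reconstruction error, normalized the same way as np.cov.
recon_error = np.sum((Xc - X_hat) ** 2) / (len(X) - 1)
discarded_variance = eigvals[k:].sum()
```

Here the third eigenvalue is tiny because the toy data is essentially two-dimensional plus noise, so dropping that component loses almost nothing.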

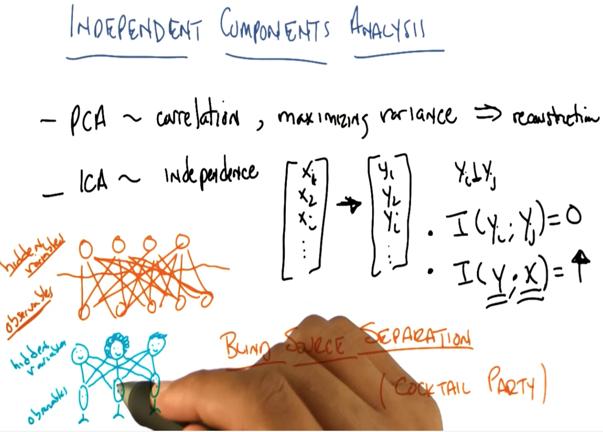

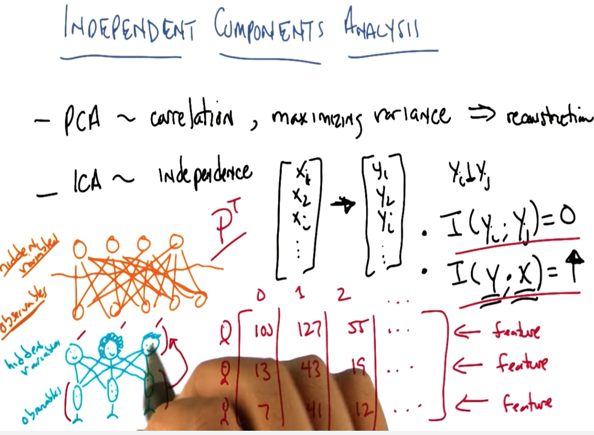

ICA

ICA has also been applied to the information retrieval problem, in a paper written by Charles himself.

- Finds components that are statistically independent of each other, using mutual information.

- Designed to solve the blind source separation problem.

- Model: given observables, find hidden variables.
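A minimal sketch of the blind source separation setup for two sources. Everything specific here is an assumption for illustration: the mixing matrix `A`, the choice of source distributions, and above all the use of excess kurtosis as a stand-in for the mutual information criterion mentioned above. It whitens the observables and then searches for the rotation that makes the outputs most non-Gaussian.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 5000

# Hidden variables: two independent, non-Gaussian sources (assumed).
S = np.vstack([rng.uniform(-1, 1, n), rng.laplace(0, 1, n)])
S = (S - S.mean(axis=1, keepdims=True)) / S.std(axis=1, keepdims=True)

# Observables: a linear mixture of the sources (hypothetical mixing matrix).
A = np.array([[1.0, 0.6],
              [0.4, 1.0]])
X = A @ S

# Step 1: whiten the observables (zero mean, identity covariance).
Xc = X - X.mean(axis=1, keepdims=True)
eigvals, eigvecs = np.linalg.eigh(np.cov(Xc))
Z = np.diag(eigvals ** -0.5) @ eigvecs.T @ Xc

def excess_kurtosis(Y):
    """Per-row excess kurtosis; it is 0 for a Gaussian signal."""
    return np.mean(Y ** 4, axis=1) / np.mean(Y ** 2, axis=1) ** 2 - 3.0

def rotation(theta):
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s], [s, c]])

# Step 2: after whitening, only a rotation is left to find. Pick the angle
# whose outputs are the most non-Gaussian (a proxy for independence).
angles = np.linspace(0.0, np.pi / 2, 181)
scores = [np.abs(excess_kurtosis(rotation(t) @ Z)).sum() for t in angles]
S_hat = rotation(angles[int(np.argmax(scores))]) @ Z

# |correlation| between each true source (rows) and each estimate (columns).
match = np.abs(np.corrcoef(np.vstack([S, S_hat]))[:2, 2:])
```

The recovered signals match the sources only up to order and sign, which is why the comparison uses absolute correlations. A real ICA routine handles more than two sources and uses a better optimizer than a grid search.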

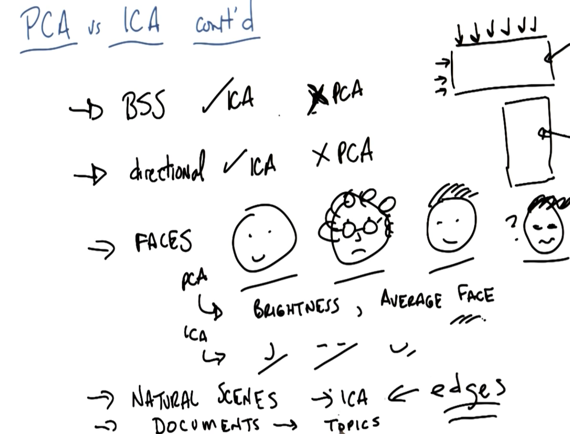

- ICA is better suited to blind source separation (BSS) problems and is directional.

- E.g., PCA on faces will separate images based on brightness and average faces. ICA will find features such as the nose and mouth, which are basic components of a face.

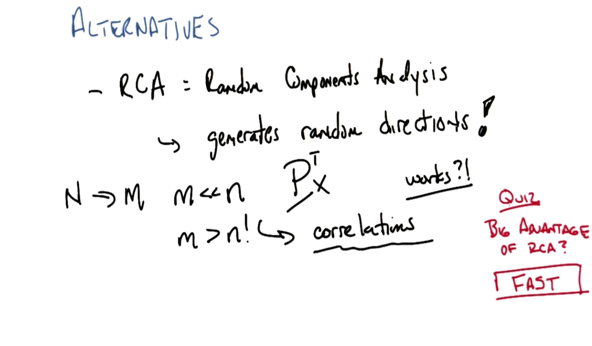

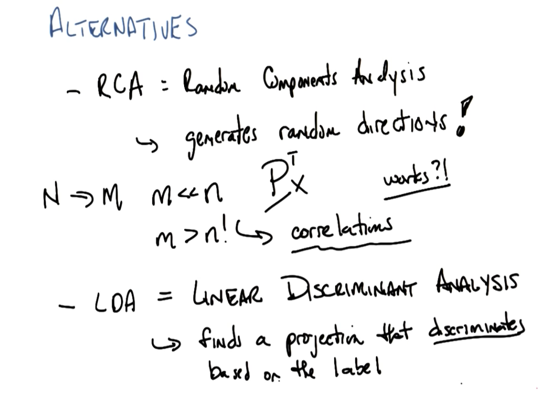

Alternatives:

Random Components Analysis: generates random directions

- Can project to fewer dimensions (m ≪ n), but in practice often needs more dimensions than PCA.

- Can project to higher dimensions (m > n)

- It works and works very fast.

Linear Discriminant Analysis: finds a projection that discriminates based on the label
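The random-projection idea from the list above can be sketched in a few lines. The sizes, the Gaussian entries, and the `1 / sqrt(m)` scaling are my assumptions for this example and were not given in the lecture. The point is that there is nothing to fit: generating the directions is just drawing a random matrix.

```python
import numpy as np

rng = np.random.default_rng(0)

# Made-up sizes: n original features projected down to m random directions.
n_samples, n, m = 50, 1000, 200
X = rng.normal(size=(n_samples, n))

# Random directions. The 1/sqrt(m) scaling (an assumption here) keeps
# distances on roughly the same scale after projection.
P = rng.normal(size=(n, m)) / np.sqrt(m)
X_proj = X @ P

# Compare one pairwise distance before and after projection.
dist_before = np.linalg.norm(X[0] - X[1])
dist_after = np.linalg.norm(X_proj[0] - X_proj[1])
ratio = dist_after / dist_before
```

Setting `m > n` in the same code projects to a higher-dimensional space instead.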

Wrap up

This excellent paper is a great resource for the Feature Transformation methods from this course, and beyond.

2016-03-17 first draft

2016-03-26 completed